Room Sync

Virtual reality systems use different tracking methods, each with its own strengths and weaknesses.

One notable strength of Valve's Lighthouse tracking system is that the reference points from which position is derived are static.

This allows accurate relative positioning between two tracked objects within a shared playspace, because both derive their position from the same reference points: a shared pair of Lighthouse units.

With this, you can keep each player's avatar aligned with where that player actually stands in real space, relative to the other player. If you see someone in the virtual world and lift up your headset, they will be standing in the same place in the real world. If you touch someone where their head appears in the game, you will touch their head in real life.
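One way to see why a shared reference frame is enough: if both headsets report positions in the same playspace coordinates and the application maps them into the virtual world with the same rotation and offset, the gap between the avatars matches the gap between the real players. The sketch below is illustrative only. The function name, the coordinate convention (y up, metres), and all the numbers are assumptions, not part of SteamVR or any engine API.

```python
import math

def playspace_to_world(pos, world_origin, yaw_deg):
    """Map a playspace (x, y, z) position into the virtual world.

    Hypothetical helper: rotates about the vertical (y) axis by yaw_deg,
    then translates so the playspace center lands on world_origin.
    """
    x, y, z = pos
    yaw = math.radians(yaw_deg)
    wx = x * math.cos(yaw) + z * math.sin(yaw)
    wz = -x * math.sin(yaw) + z * math.cos(yaw)
    ox, oy, oz = world_origin
    return (wx + ox, y + oy, wz + oz)

# Assumed head positions in the shared playspace frame, in metres.
player_a = (-0.8, 1.7, 0.3)
player_b = (0.9, 1.6, -0.4)

# Assumed placement of the playspace center inside the virtual scene.
scene_center = (10.0, 0.0, 25.0)
scene_yaw_deg = 90.0

real_gap = math.dist(player_a, player_b)
virtual_gap = math.dist(
    playspace_to_world(player_a, scene_center, scene_yaw_deg),
    playspace_to_world(player_b, scene_center, scene_yaw_deg),
)

# Both players use the same transform, so the two gaps agree.
print(f"real gap: {real_gap:.3f} m, virtual gap: {virtual_gap:.3f} m")
```

The key design choice is that both machines must use the same origin and yaw. If they differ, the avatars drift away from the real bodies.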

We call this "Room Sync".

Here's how it works in layman's terms:

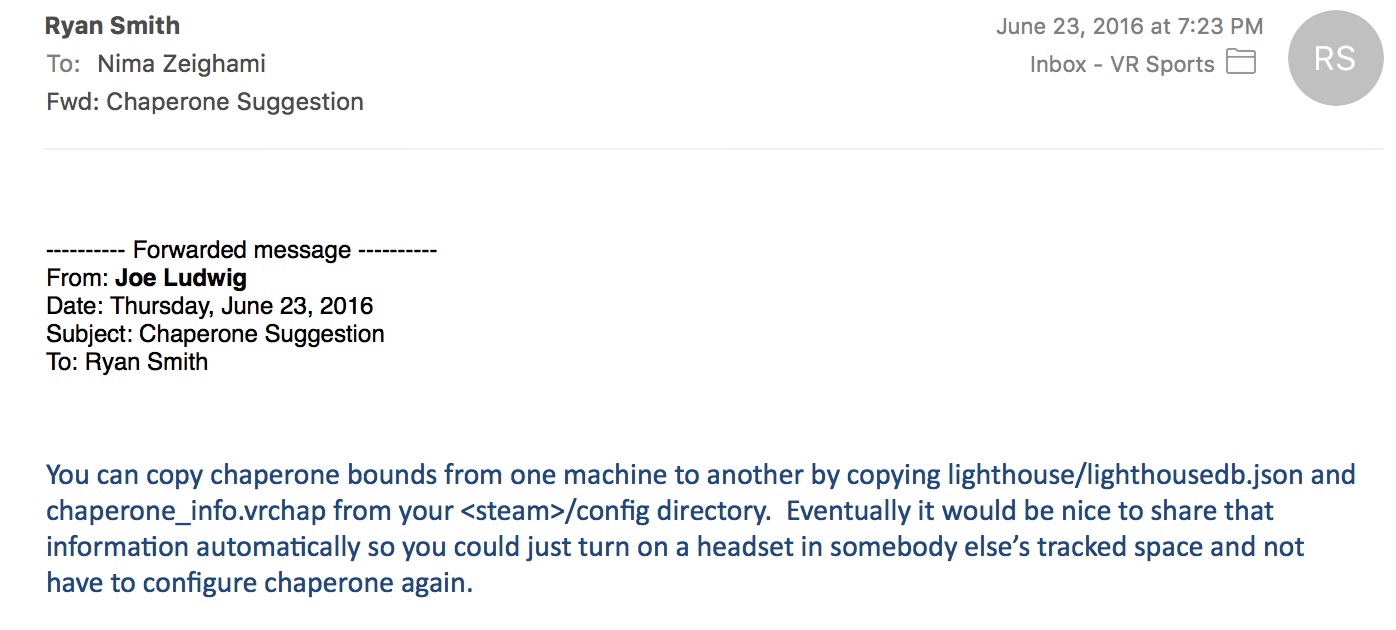

1. You configure the HTC Vives with the exact same playspace and forward direction in SteamVR room setup. You can do this by hand on each machine with room setup, or, preferably, you can pass the exact settings between machines using the method below. Ryan Smith of Invrse Studios and Joe Ludwig of Valve were kind enough to share this info.

2. You launch a VR experience capable of Room Sync, which is any room-scale program in which two players connect over a network and spawn in relation to the center of the shared virtual space. For example, imagine a racquetball court where a player plays against a wall, with both players spawning in relation to the center of the court (assuming there aren't space constraints). This requires careful network engineering to keep latency as low as possible so that people don't hit each other, though over a local network it's not an issue.

Once that occurs, you can no longer "ghost" through the other player, and you must take care to give them space. This method of virtual connectivity has a variety of compelling applications, such as competitive virtual sports (vSports), teledildonics, and social apps.
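To get a feel for why latency matters in step 2, a rough estimate helps: the other player's avatar lags their real body by about their speed multiplied by the network delay. The snippet below is a back-of-the-envelope sketch. The walking speed and the two latency figures are assumed values chosen for illustration, not measurements.

```python
def stale_position_error(speed_m_per_s, latency_s):
    """Distance a player moves before their update reaches the other machine."""
    return speed_m_per_s * latency_s

# Assumed values for illustration only.
walking_speed = 1.4  # metres per second
scenarios = [
    ("local network", 0.005),  # 5 ms, assumed
    ("internet", 0.100),       # 100 ms, assumed
]

for label, latency in scenarios:
    error_cm = stale_position_error(walking_speed, latency) * 100
    print(f"{label}: avatar may lag the real player by about {error_cm:.1f} cm")
```

Under these assumptions the lag is under a centimetre on a local network, which is consistent with it not being an issue there, while a slower link leaves a gap large enough to cause a collision.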